by Adam Becker (science communicator in residence, Spring 2023)

Think about the last time you faced a problem you couldn’t solve. Say it was something practical, something that seemed small — a leaky faucet, for example. There’s an exposed screw right on the top of the faucet handle, so you figure all you need to do is turn the faucet off as far as it will go, and then tighten that screw. So you try that, and it doesn’t work. You get a different screwdriver, a better fit for the screw, but you can’t get it to budge. You grab a wrench and take apart the faucet handle, and that doesn’t help much either — it turns out there’s far more under there than you’d expected, and you can barely put it back together again. You’re about to give up and call a plumber, but first you want to see whether you’re close. Maybe it really is easy to fix the problem, and you just need to know where to look. Or maybe it’s far more difficult than you think. So now you’re trying to solve a new problem, a meta-problem: instead of fixing the leaky faucet, you’re trying to figure out how hard it will be to fix the leaky faucet. You turn to the internet, and find that there are many different kinds of faucets and sinks, some of which are practically indistinguishable, and there are different reasons they can leak, unique to each type of sink. Simply determining the difficulty of fixing your leaky faucet is itself turning out to be more difficult than you expected.

Theoretical computer scientists have been facing their own version of this problem for decades. Many of the problems they ask are about complexity: How hard must a computer (really, an idealized version of one) work to perform a particular task? One such task, famous in the annals of both mathematics and computer science — theoretical computer science is where the two disciplines meet — is the traveling salesperson problem. Imagine a traveling salesperson, going from city to city. Starting from her home, she has a list of cities she must visit, and a map with the distances between those cities. Her budget limits the total distance she can travel to a certain maximum, so she’d like to find a route shorter than that maximum distance that allows her to visit each of the cities on her list, returning to her home city at the end. Given her list of cities and her budget, does such a route exist?

There is no known method for solving this problem quickly in a general way, that is, a method that would work for all possible budgets and lists of cities that the salesperson might have. There are ways of doing it, but all of them take a large number of calculations relative to the number of cities on the list, and thus take a great deal of time, especially as the number of cities increases. In fact, the fastest guaranteed method known for solving the traveling salesperson problem takes, in general, an amount of time that grows exponentially as the number of cities on the list increases. Cleverer methods than brute-forcing the problem by checking every possible route do exist, but none of the known ones escapes this exponential growth. Compare this with verifying a solution to the traveling salesperson problem: that’s easy. All you have to do is confirm that the solution does in fact visit every city once, and that the total distance of the route is shorter than the maximum allowed by the salesperson’s budget.
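The gap between finding a route and checking one can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the names `route_length`, `verify_route`, and `find_route` are hypothetical, cities are numbered from 0 with city 0 as home, distances come as a square table, and a route counts as acceptable when its length is at most the budget.

```python
from itertools import permutations


def route_length(route, dist):
    """Length of the closed tour that follows `route` and then returns to its start."""
    n = len(route)
    return sum(dist[route[i]][route[(i + 1) % n]] for i in range(n))


def verify_route(route, dist, budget):
    """Fast check: every city appears exactly once and the tour fits the budget."""
    visits_each_once = sorted(route) == list(range(len(dist)))
    return visits_each_once and route_length(route, dist) <= budget


def find_route(dist, budget):
    """Brute-force search: try every ordering of the non-home cities."""
    for rest in permutations(range(1, len(dist))):
        route = [0, *rest]
        if route_length(route, dist) <= budget:
            return route
    return None  # no tour fits the budget
```

Here `verify_route` does work proportional to the number of cities (plus a sort), while `find_route` may examine (n − 1)! orderings for n cities. That is the asymmetry the paragraph above describes.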

Although the traveling salesperson problem seems solvable in general only by a lengthy brute-force method, a given solution is fast to verify, and that fast verification is what places the problem into a class of “computational complexity” known as NP. (NP stands for “nondeterministic polynomial time,” and it’s not particularly important to understand that name in order to understand what’s going on here.) Compare this with a problem like determining whether the last entry on a list of numbers is the largest, for which there are known (and straightforward) methods that don’t scale exponentially with the length of the list. Such problems, which can be solved and verified quickly, are in a complexity class called P, a special subset of NP.
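For contrast, here is a minimal sketch of the “last entry is the largest” problem. The function name `last_is_largest` is hypothetical, and treating an empty list as a “no” answer is an assumption made for this illustration.

```python
def last_is_largest(numbers):
    """Return True if no entry in the list exceeds the last one."""
    if not numbers:
        return False  # assumption: an empty list has no last entry to compare
    last = numbers[-1]
    # One pass over the list: the work grows in step with its length.
    return all(x <= last for x in numbers)
```

Doubling the length of the list roughly doubles the work, which is the kind of gentle scaling that puts a problem in P.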

On the face of it, NP and P seem to be different; the traveling salesperson problem (TSP) can’t be solved quickly by any known method. But the trouble, for computer scientists, begins with those words “known method.” While nobody knows a fast way of solving a problem like the traveling salesperson problem, that doesn’t mean no such method exists. Finding such a method would show that TSP actually belongs in P. In fact, it would show more than that, because computer scientists have proved that TSP is not just a member of NP — it is NP-complete: if there were an efficient solution to TSP, it could be adapted to solve every other problem in NP quickly too. Therefore, a fast solution to TSP wouldn’t just show that TSP is part of P — it would show that every problem in NP is a member of P, making P and NP the same complexity class. But if instead someone were to prove that there is no universally fast method for solving TSP, this would mean that TSP and many other similarly difficult problems in NP aren’t in P, meaning that P and NP are not the same complexity class.

So which is it? Does P = NP or not? Nobody knows. This question has haunted theoretical computer science for more than half a century, resisting all attempts at solution, or even reasonable progress. And like the leaky faucet, this difficulty has prompted computer scientists to think about a meta-problem: What’s the complexity of proving whether P = NP? How intricate must a proof that resolves this question be? Is there a trick to it, the kind of thing that looks simple in retrospect? Or is it the sort of proof that requires a great deal of intricate mathematics and novel proof techniques? This is meta-complexity: evaluating the complexity of questions that are themselves about computational complexity. The Simons Institute held a research program on the topic in Spring 2023.

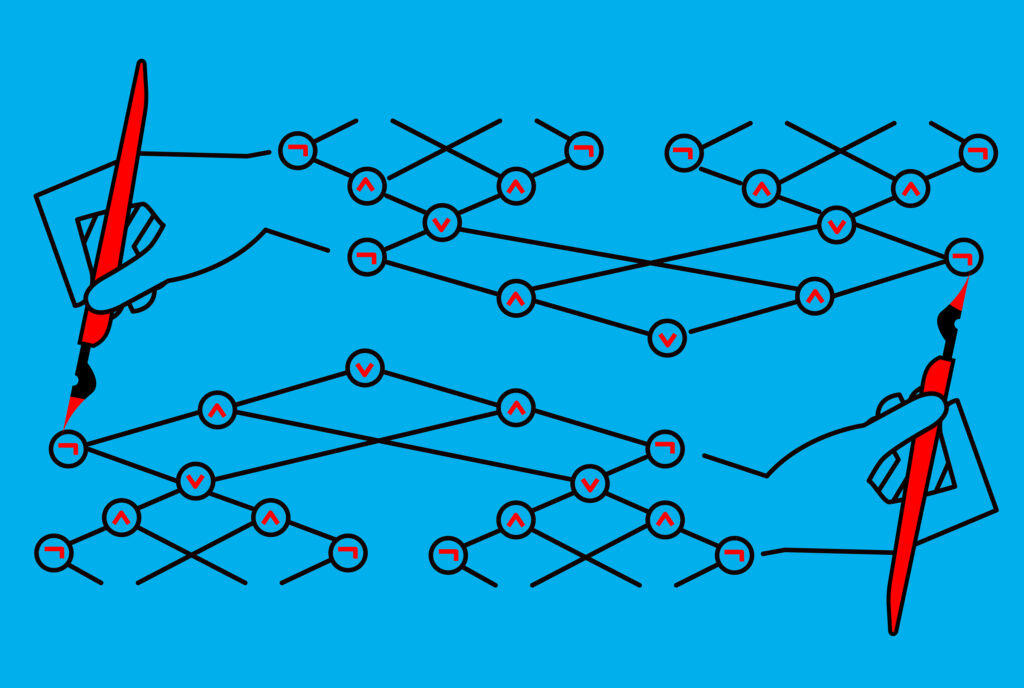

Meta-complexity isn’t a new idea. Starting in the late 1940s, pioneers in early computer science on both sides of the Iron Curtain were considering an optimization problem, like TSP, but about idealized computers rather than an idealized salesperson. Specifically, they were thinking about small computers of unknown architecture: black boxes that can be studied only through their behavior. Say you have one of these computers, a little black box that lets you input any whole number you like, up to a certain size. When you do, the box gives you either a 0 or a 1 as output. You want to know what’s in the box, so you start going through inputs and outputs systematically, making a table. 0 gives you 1, 1 gives you 0, 2 gives you 1, and so on. The question these early computer scientists were asking was this: Given a particular table of inputs and outputs, what is the least complex architecture that could be inside this black box doing the computing? If you have a “circuit size budget” — like the traveling salesperson’s travel budget — is there a circuit small enough to fit within your budget that could do what the black box does? These questions became known as the minimum circuit size problem (MCSP). Once these questions had been asked, the next one was: What’s the computational complexity of MCSP itself?

This is another form of meta-complexity: a question about the complexity of a problem that is itself about complexity. And this time, there’s a known answer. MCSP (at least the second version of it, asking about circuits smaller than a certain size) is in NP: it’s easy to confirm that a solution is correct, but there doesn’t seem to be a general solution to the problem other than a brute-force search. But is MCSP NP-complete? Is it as hard as the hardest problems in NP, like TSP is, and would a fast way of solving it — like solving TSP — mean proving all problems in NP are actually in P? MCSP “seems to really capture that kind of flavor of an unstructured search space — circuits that don’t necessarily have much to do with each other — so shouldn’t you be able to show that not only is MCSP contained in NP, but it is one of the hardest problems in NP, it is NP-complete?” said Marco Carmosino, research scientist at IBM, last year. “It is 2023 and we still have not proved that MCSP is NP-[complete].”
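To see why a proposed answer to MCSP is easy to check, consider the following sketch. Everything about the representation is an assumption made for illustration: a circuit is a list of `(op, a, b)` gates over AND, OR, and NOT; wire `i` initially holds bit `i` of the input number; each gate adds one new wire; and the last wire is the output. The names `evaluate` and `verify_circuit` are hypothetical.

```python
def evaluate(gates, n_inputs, x):
    """Run the circuit on input number x and return the value on its last wire."""
    wires = [(x >> i) & 1 for i in range(n_inputs)]  # wire i = bit i of x
    for op, a, b in gates:
        if op == "AND":
            wires.append(wires[a] & wires[b])
        elif op == "OR":
            wires.append(wires[a] | wires[b])
        elif op == "NOT":
            wires.append(1 - wires[a])  # the second operand is ignored
        else:
            raise ValueError(f"unknown gate: {op}")
    return wires[-1]


def verify_circuit(gates, n_inputs, table, budget):
    """Check that the circuit fits the size budget and reproduces the whole table."""
    if len(gates) > budget:
        return False
    return all(evaluate(gates, n_inputs, x) == table[x]
               for x in range(2 ** n_inputs))
```

For a two-bit black box whose table reads 0 gives 1, 1 gives 0, 2 gives 1, 3 gives 0, the single gate `[("NOT", 0, 0)]` passes `verify_circuit` with a budget of 1. The check runs each table row through the circuit once, so its cost is polynomial in the size of the table. Finding a small enough circuit in the first place is the part with no known shortcut.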

These two forms of meta-complexity — questions about the difficulty of proofs about complexity classes, and questions about the complexity of problems about complexity — are linked. The first kind of meta-complexity, about the difficulty of proofs about complexity, has roots stretching as far back as the work of legendary logician Kurt Gödel in the mid-20th century, as well as the origins of modern logic and meta-mathematics around the turn of the 20th century, in the generations immediately preceding Gödel. But starting in the 1970s — not long after the first formal introduction of the P = NP question — and continuing ever since, computer scientists started proving rigorous results about why such problems were difficult to solve. These “barrier” proofs showed that many common proof techniques used in computer science simply could not solve questions like P vs. NP. Going back to the analogy of fixing the leaky faucet, these barrier proofs would be like finding out that using a screwdriver or a wrench at all would doom you to failure.

But while barrier proofs could be seen as disheartening, they were also informative: they told computer scientists that they would be wasting their time to attempt a solution using those tools, and that any real solution to the problem must lie elsewhere. As work continued over the following decades, computer scientists found further barriers and proofs. But recently, examining the structure of those barriers has led to a burst of activity in meta-complexity, with new results making progress toward old problems like whether P = NP, as well as revealing unexpected connections within the field. Computer scientists working in meta-complexity have not only shown links between various measures of complexity, but have also found deep connections between their own subfield and other areas of computer science, like learning theory and cryptography. “The scope and centrality of meta-complexity has dramatically expanded over the past 10-ish years or so, as breakthroughs show that cryptographic primitives and learning primitives end up being not just reducible to but equivalent to solutions to meta-computational problems. And that attracts attention — that attracts excitement. And the proof techniques are very cool,” said Carmosino, who was a research fellow with the Institute’s Meta-Complexity program. “And so it’s very rich, what’s going on right now. A dense network of connections is all jelling together all at once. It’s very exciting. … We can use [meta-complexity] as a tool to migrate techniques between these disparate areas of theoretical computer science and show that, really, the field is more unified than it looks.” And with the perspective afforded by meta-complexity, perhaps P vs. NP — the leaky faucet that has been dripping away in the heart of computer science for half a century — will, someday, yield to a solution.